Two of the most important conferences for social robotics are Robophilosophy and ICSR. After Robophilosophy, a biennial, was last held in Helsinki in August 2022, ICSR is now coming up in Florence (13 – 16 December 2022). “The 14th International Conference on Social Robotics (ICSR 2022) brings together researchers and practitioners working on the interaction between humans and intelligent robots and on the integration of social robots into our society. … The theme of this year’s conference is Social Robots for Assisted Living and Healthcare, emphasising on the increasing importance of social robotics in human daily living and society.” (Website ICSR) The committee sent out notifications by October 15, 2022. The paper “The CARE-MOMO Project” by Oliver Bendel and Marc Heimann was accepted. This is a project that combines machine ethics and social robotics. The invention of the morality menu was transferred to a care robot for the first time. The care recipient can use sliders on the display to determine how he or she wants to be treated. This allows them to transfer their moral and social beliefs and ideas to the machine. The morality module (MOMO) is intended for the Lio assistance robot from F&P Robotics. The result will be presented at the end of October 2022 at the company headquarters in Glattbrugg near Zurich. More information on the conference via www.icsr2022.it.

The Morality Menu Project

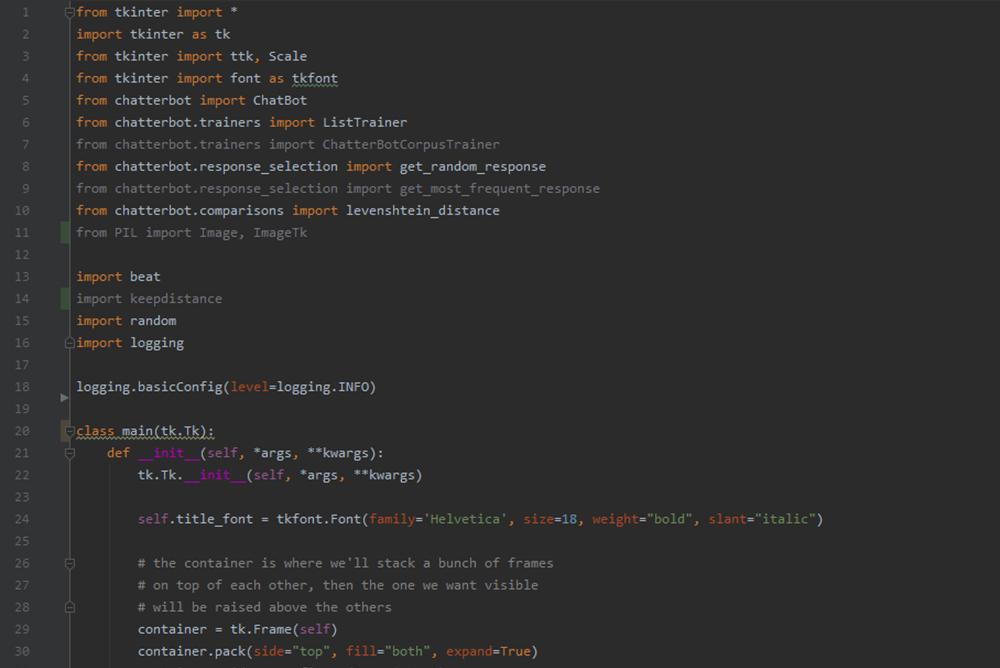

From 18 to 21 August 2020, the Robophilosophy conference took place. Due to the pandemic, participants could not meet in Aarhus as originally planned, but only in virtual space. Nevertheless, the conference was a complete success. At the end of the year, the conference proceedings were published by IOS Press, including the paper “The Morality Menu Project” by Oliver Bendel. From the abstract: “The discipline of machine ethics examines, designs and produces moral machines. The artificial morality is usually pre-programmed by a manufacturer or developer. However, another approach is the more flexible morality menu (MOME). With this, owners or users replicate their own moral preferences onto a machine. A team at the FHNW implemented a MOME for MOBO (a chatbot) in 2019/2020. In this article, the author introduces the idea of the MOME, presents the MOBO-MOME project and discusses advantages and disadvantages of such an approach. It turns out that a morality menu could be a valuable extension for certain moral machines.” The book can be ordered on the publisher’s website. An author’s copy is available here.

The MOML Project

In many cases it is important that an autonomous system acts and reacts adequately from a moral point of view. There are some artifacts of machine ethics, e.g., GOODBOT or LADYBIRD by Oliver Bendel or Nao as a care robot by Susan Leigh Anderson and Michael Anderson. But there is no standardization in the field of moral machines yet. The MOML project, initiated by Oliver Bendel, is trying to work in this direction. In the management summary of his bachelor thesis, Simon Giller writes: “We present a literature review in the areas of machine ethics and markup languages which shaped the proposed morality markup language (MOML). To overcome the most substantial problem of varying moral concepts, MOML uses the idea of the morality menu. The menu lets humans define moral rules and transfer them to an autonomous system to create a proxy morality. Analysing MOML excerpts allowed us to develop an XML schema which we then tested in a test scenario. The outcome is an XML based morality markup language for autonomous agents. Future projects can use this language or extend it. Using the schema, anyone can write MOML documents and validate them. Finally, we discuss new opportunities, applications and concerns related to the use of MOML. Future work could develop a controlled vocabulary or an ontology defining terms and commands for MOML.” The bachelor thesis will be publicly available in autumn 2020. It was supervised by Dr. Elzbieta Pustulka. There will also be a paper with the results next year.
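To make the idea of an XML-based morality document more concrete, here is a minimal Python sketch. The post does not show the actual MOML schema, so the element and attribute names (`moml`, `rule`, `id`, `active`) and the function `load_rules` are purely hypothetical. The check is a hand-written structural test, not a validation against the XML schema developed in the thesis.

```python
import xml.etree.ElementTree as ET
from typing import Dict

# Hypothetical document: these element and attribute names are assumptions
# for illustration only and are not taken from the real MOML schema.
MOML_EXAMPLE = """
<moml>
  <rule id="compliment" active="true"/>
  <rule id="threaten" active="false"/>
</moml>
"""


def load_rules(xml_text: str) -> Dict[str, bool]:
    """Parse a MOML-like document into a mapping of rule id -> active flag."""
    root = ET.fromstring(xml_text.strip())
    if root.tag != "moml":
        raise ValueError("root element must be <moml>")

    rules: Dict[str, bool] = {}
    for rule in root.findall("rule"):
        rule_id = rule.get("id")
        active = rule.get("active")
        # Simple structural check standing in for real schema validation.
        if rule_id is None or active not in ("true", "false"):
            raise ValueError("each <rule> needs an id and active='true'/'false'")
        rules[rule_id] = active == "true"
    return rules


if __name__ == "__main__":
    print(load_rules(MOML_EXAMPLE))
```

An autonomous system could read such a mapping at start-up and use it as the owner's proxy morality. Validating against a real schema would require an XML Schema validator, which the Python standard library does not include.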

Towards a Proxy Machine

“Once we place so-called ‘social robots’ into the social practices of our everyday lives and lifeworlds, we create complex, and possibly irreversible, interventions in the physical and semantic spaces of human culture and sociality. The long-term socio-cultural consequences of these interventions is currently impossible to gauge.” (Website Robophilosophy Conference) With these words the next Robophilosophy conference was announced. It would have taken place in Aarhus, Denmark, from 18 to 21 August 2020, but due to the COVID-19 pandemic it is being conducted online. One lecture will be given by Oliver Bendel. The abstract of the paper “The Morality Menu Project” states: “Machine ethics produces moral machines. The machine morality is usually fixed. Another approach is the morality menu (MOME). With this, owners or users transfer their own morality onto the machine, for example a social robot. The machine acts in the same way as they would act, in detail. A team at the School of Business FHNW implemented a MOME for the MOBO chatbot. In this article, the author introduces the idea of the MOME, presents the MOBO-MOME project and discusses advantages and disadvantages of such an approach. It turns out that a morality menu can be a valuable extension for certain moral machines.” In 2018, Hiroshi Ishiguro, Guy Standing, Catelijne Muller, Joanna Bryson, and Oliver Bendel were keynote speakers. In 2020, Catrin Misselhorn, Selma Sabanovic, and Shannon Vallor will be presenting. More information via conferences.au.dk/robo-philosophy/.

The Birth of the Morality Menu

The idea of a morality menu (MOME) was born in 2018 in the context of machine ethics. It should make it possible to transfer the morality of a person to a machine. On a display you can see different rules of behaviour and you can activate or deactivate them with sliders. Oliver Bendel developed two design studies, one for an animal-friendly vacuum cleaning robot (LADYBIRD), the other for a voicebot like Google Duplex. At the end of 2018, he announced a project at the School of Business FHNW. Three students – Ozan Firat, Levin Padayatty and Yusuf Or – implemented a morality menu for a chatbot called MOBO from June 2019 to January 2020. The user enters personal information and then activates or deactivates nine different rules of conduct. MOBO compliments or does not compliment, responds with or without prejudice, threatens or does not threaten the interlocutor. It responds to each user individually, says his or her name – and addresses him or her formally or informally, depending on the setting. A video of the MOBO-MOME is available here.
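The slider mechanism described above can be illustrated with a small Python sketch. It is not the MOBO-MOME implementation: the class `MoralityMenu`, the function `greet`, the two rule names and the reply texts are hypothetical. The post mentions nine rules of conduct without listing them all, so only two are modelled here.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class MoralityMenu:
    """On/off settings a user might choose; all names here are illustrative."""
    user_name: str
    formal_address: bool = True
    # Each entry stands for one slider; True means the rule is activated.
    rules: Dict[str, bool] = field(
        default_factory=lambda: {"compliment": False, "threaten": False}
    )

    def set_rule(self, name: str, active: bool) -> None:
        """Move one slider; unknown rule names are rejected."""
        if name not in self.rules:
            raise KeyError(f"unknown rule: {name}")
        self.rules[name] = active


def greet(menu: MoralityMenu) -> str:
    """Build a greeting that respects the user's current settings."""
    salutation = "Good day" if menu.formal_address else "Hi"
    parts = [f"{salutation}, {menu.user_name}."]
    if menu.rules["compliment"]:
        parts.append("It is nice to talk to you.")
    return " ".join(parts)


if __name__ == "__main__":
    menu = MoralityMenu(user_name="Alex")
    print(greet(menu))
    menu.set_rule("compliment", True)
    menu.formal_address = False
    print(greet(menu))
```

Keeping the settings in one object, separate from the reply logic, mirrors the idea that the user's morality is transferred to the machine as data rather than hard-coded by the developer.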

Development of a Morality Menu

Machine ethics produces moral and immoral machines. The morality is usually fixed, e.g. by programmed meta-rules and rules. The machine is thus capable of certain actions, not others. However, another approach is the morality menu (MOME for short). With this, the owner or user transfers his or her own morality onto the machine. The machine behaves in the same way as he or she would behave, in detail. Together with his teams, Prof. Dr. Oliver Bendel developed several artifacts of machine ethics at his university from 2013 to 2018. For one of them, he designed a morality menu that has not yet been implemented. Another concept exists for a virtual assistant that can make reservations and orders for its owner more or less independently. In the article “The Morality Menu” the author introduces the idea of the morality menu in the context of two concrete machines. Then he discusses advantages and disadvantages and presents possibilities for improvement. A morality menu can be a valuable extension for certain moral machines. You can download the article here. In 2019, a morality menu for a robot will be developed at the School of Business FHNW.